Citation: Am Sci 84 (2): 166–178, 1996. Republished in Current Perspectives in Geology, Fourth Edition, Michael McKinney, Robert L. Tolliver, Parri Shariff, eds., Wadsworth, Boston, MA, 1998.

Keywords: Industrial ecology, dematerialization, material substitution, economics, zero emission, materials, life cycle, decarbonization

Areas of Research: Technology & Human Environment

Evolving efficiencies in our use of resources suggest that technology can restore the environment even as population grows

(NOTE: The color figures are at the end of this document for easier online reading)

Technologies have enabled us to expand our range and transform the earth. In 1909 Peary sledded to the North Pole, and in 1911 Amundsen reached the South. Improved navigational aids and ships that could withstand the pack ice made the poles accessible to men and dogs. Less than a century later we worry about the environmental purity of the polar regions and the ozone that shields them. My fundamental question is whether the technology that has conquered the earth can also spare it. To answer this question, I shall examine secular trends in what technology does with four paramount resources: energy, materials, land and water. I focus on the evolving efficiency of use of these resources. Economists call such resources “factors of production,” along with labor and capital.

Customarily, technology’s relation to environment is considered by evaluating lists of devices and machines: cars, oil tankers, nuclear power stations, windmills, wastewater-treatment plants, spray cans and chain saws. My approach is more basic. I ask whether technology enables us to obtain services more efficiently and, if so, at what rates. The answers indicate the feasibility of greatly diminishing our environmental burdens by increasing the productivity of our resources.

Analysts, eager to assimilate the latest information, live life on the tangent, extrapolating brief fluctuations to eternity. To counter this tendency, I search for stable signals amid the noise of the daily news. The historical analyses shared here, many contributed to an ongoing project at The Rockefeller University on technological trajectories and the human environment, seek the inherent lifetimes of processes of technological development, which can extend generations and centuries. Recognizing and formally analyzing incomplete developmental processes and the rhythmic patterns of processes permits confident prediction.

Identifying secular trends also enables me to frame answers to a second question: what distinguishes the last half-century or so with regard to environment and technology? The years around 1970 marked the maximum rate of growth of human population in modern times. Have we more generally passed a point of inflection in the curve of human development? Finally, what present actions will wave us toward sweet, greener days?

Two basic arguments weigh against technology. One is that technology’s success is self-defeating. Technology makes the human niche elastic. If we solve problems, our population grows and creates further, eventually insurmountable problems. The cardinal case is the conquest of death in developing countries. Public-health measures and modern medicine defeat mortality, while fertility declines at a much slower pace, and so population explodes. Before closing, I shall consider technology’s relation to population. Population is always the catch.

The second argument contra-technology is the paucity of human wisdom. Technology creates handguns and hydrogen bombs, and these kill. We can use science and technology to provide goods and services for human sustenance and comfort and other purposes worthy for the planet. But technology powers good and evil. Some would feel more comfort with less power. I leave it to others to discuss the cultural controls to assure constructive use of science and technology.

A subordinate, manageable argument is that unanticipated consequences of the introduction of technologies diminish their value. Chlorinated fluorocarbons solved the problem of explosive and inefficient ammonia-based refrigerators, but turned out 40 years after their introduction to threaten life’s stratospheric filter. The appropriate response is a feedback system: Assess technologies early in their prospective social penetration, watch them thereafter for surprises and tailor designs to fit changing needs and tastes.

I outline a global picture, with most detail from the United States. For more than a century the United States has on average adopted technologies earliest, diffused them fullest and documented the outcomes. The symptoms and cures show.

Energy

Energy systems extend from the mining of coal through the generation and transmission of electricity to the artificial light that enables the reader to see this page. For environmental technologists, two central questions define the energy system. First, is the efficiency increasing? Second, is the carbon used to deliver energy to the final user declining?

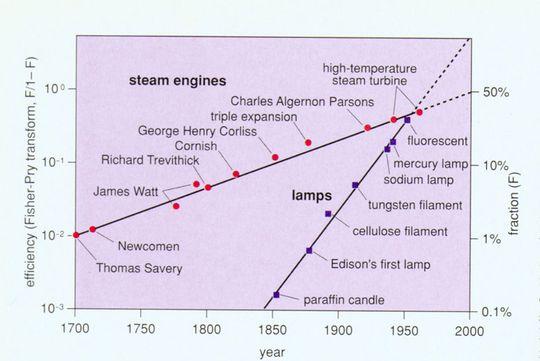

Energy efficiency has been gaining in many segments, probably for thousands of years. Think of all the designs and devices to improve fireplaces and chimneys. Or consider the improvement in motors and lamps (Figure 2). About 1700 the quest began to build efficient engines, at first with steam. Three hundred years have increased the efficiency of generators from 1 to about 50 percent of the apparent limit, the latter achieved by today’s best gas turbines. Fuel cells can advance efficiency to 70 percent. They will require about 50 years to do so, if the socio-technical clock continues to tick at its established rate. In 300 years, physical laws may finally arrest our engine progress.

Whereas centuries measure the struggle to improve generators, lamps brighten with each decade. A new design proposes to bombard sulfur with microwaves. One such bulb the size of a golf ball could purportedly produce the same amount of light as hundreds of high-intensity mercury-vapor lamps, with a quality of light comparable to sunlight. The current 100-year pulse of improvement evident in Figure 2 will surely not extinguish ideas for illumination. The next century may reveal quite new ways to see in the dark. For example, nightglasses, the mirror image of sunglasses, could make the objects of night visible with a few milliwatts.

Segments of the energy economy have advanced impressively toward local ceilings of 100 percent efficiency. However, modern economies still work far from the limit of system efficiency because system efficiency is multiplicative, not additive. In fact, if we define efficiency as the ratio of the theoretical minimum to the actual energy consumption for the same goods and services, modern economies probably run at less than 5 percent efficiency for the full chain from extracting primary energy to delivery of the service to the final user. So, far from a ceiling, the United States has averaged about 1 percent less energy to produce a good or service each year since about 1800. At that pace of advance, total efficiency will still approach only 15 percent by 2100. Because of some losses difficult to avoid in each link of the chain, the thermodynamic efficiency of the total system in practice could probably never exceed 50 percent. Still, in 1995 we are early in the game.
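The compounding behind the 15 percent figure can be checked with a short calculation. The sketch below is only an illustration: it assumes a starting efficiency of 5 percent in 1995 and reads "1 percent less energy each year" as energy per unit of service shrinking by a factor of 0.99 annually. The function and parameter names are hypothetical.

```python
def projected_efficiency(start_efficiency, annual_saving, years):
    """Efficiency after `years` if the energy needed per unit of service
    falls by the fraction `annual_saving` every year."""
    # Energy still needed per unit of service, relative to the start year.
    energy_ratio = (1.0 - annual_saving) ** years
    # Efficiency is inversely proportional to energy used per service.
    return start_efficiency / energy_ratio


# Assumed start: 5 percent efficiency in 1995, improving 1 percent per year.
eff_2100 = projected_efficiency(0.05, 0.01, 2100 - 1995)
print(f"Projected system efficiency in 2100: {eff_2100:.1%}")
```

Under these assumptions the result is a little over 14 percent, consistent with the statement that efficiency would approach only 15 percent by 2100.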

What about the decarbonization of the energy system? Carbon matters because it blackens lungs, causes air pollution and oil spills and regulates climate. Carbon is also a surrogate for sulfur, heavy metals and other environmental bads that attach to it in the dirty fossil fuels. Carbon enters the energy economy bonded with hydrogen as wood (and other biomass), coal, oil and natural gas. Per unit of energy, wood weighs most heavily in carbon, followed by coal, and then oil, with natural gas following as much the lightest.

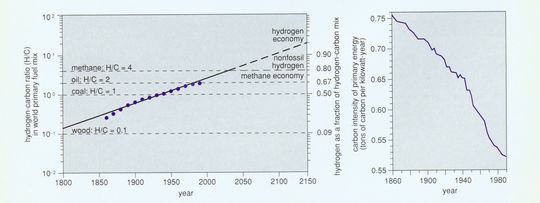

One can measure decarbonization in several different ways. The upper graph in Figure 4 shows the changing carbon intensity of primary energy for the world, where tons of carbon are divided by the total energy produced. This perspective shows that the long-term rate of decarbonization of the energy system is about 0.3 percent per year. Plentiful natural gas, efficient turbines and thrifty end-use devices promise more energy delivered with less carbon during the next decades.

Uranium also decarbonizes. At the end of 1993, 432 operating nuclear reactors produced almost 20 percent of the world’s electricity. Even if a fraction of the 48 listed in 1994 as under construction never operate, the remainder assure a continuing nuclear contribution to decarbonization. The radioactive reactor products, which are toxic and also hard and slow to degrade, and potentially powerful explosives, must of course be safely isolated. Solar sources also decarbonize but continue to stumble over obstacles in energy storage and transport.

Consider decarbonization also as the diminishing carbon intensity of the economies of a range of countries. Measured as the ratio of kilograms of carbon to gross domestic product and taking into account fuelwood and other renewable sources of energy, the decarbonization of dozens of nations studied, including Turkey, Thailand and China as well as the United Kingdom, Germany and Japan, has advanced almost in parallel. Countries begin at different times from different situations, but once they begin to decarbonize, they advance at about the same rates, and irreversibly, so far. Between 1970 and 1993, even the gas-guzzling United States more than doubled the ratio of its income to carbon use, decarbonizing about 3 percent per year. The spectrum of achievement, from about 3 kilograms of carbon per dollar of output in China to less than 0.2 in Japan and France, shows the distance most of the world economy stands from leading practice. The carbon intensity of the Chinese and Indian economies resembles the Japanese, American and European at the outset of industrialization in the 19th century.

Fundamentally, decarbonization tracks a technological competition between combustible elements. In the hydrocarbons, the truly desirable element for energy generation is not the carbon but the hydrogen. The evolution of the atomic ratio of hydrogen to carbon in the world fuel mix displays the gradual and unrelenting penetration of the energy market by the number one element of the periodic table (Figure 4, bottom).

All these analyses imply that during the next 100 years the human economy will clear most of the carbon from its system and move, via natural gas, to a hydrogen metabolism. Hydrogen, fortunately, is the immaterial material. It can be manufactured from something abundant, namely water, it can substitute for most solid, liquid and gaseous fuels in use, and the product of its combustion, water vapor, does not pollute. The next decades will see a vigorous growth in the hydrogen industry. Nightly nuclear heat seeking a market outlet can efficiently steam-reform natural gas into hydrogen and carbon dioxide, the latter permanently reinjected into the gas fields from whence it came. Later, heat, nuclear or solar, can neatly decompose water.

Hydrogen, of course, requires a partner, electricity, to provide action at a distance in a clean energy system. Since Edison began the commercial industry in the 1880s, the electrical system has grown in two neat pulses each lasting about 50 years, synchronized with long cycles of economic growth. A new pulse of growth should soon begin, in which electricity powers not only more information products but also more of the transport system, using linear motors. The magnetically levitated train soon to operate between Hamburg and Berlin inaugurates the way.

Combining analyses of efficiency and decarbonization startles many with the fact that national energy systems ranging from India to South Korea to France are heading in the right direction, toward micro-emissions. The way is long, but we are on the light path.

Land

Of all human activities, agriculture transforms the environment most widely. Crops and pasture occupy at least one-fifth the land surface, at least ten times as much as cities, towns and roads. Agriculture has consumed forests, drained wetlands, erased habitats and favored some plants over others in fierce green warfare. Farms, of course, also feed us.

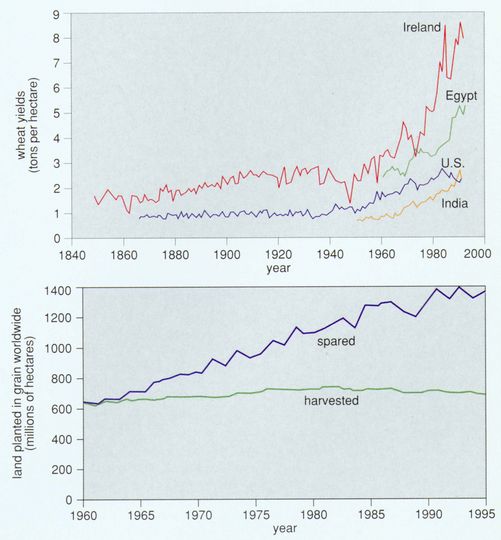

Yields per hectare measure the productivity of land and the efficiency of land use. To 1940, yields per hectare of most crops advanced little, and more mouths required more land to feed them. During the past half century, ratios of crop to land for the world’s major grains (maize, rice, soybean and wheat) have climbed, fast and globally. The rise in wheat in India, Egypt, Ireland and the U.S. shows the inception and the spread of the trend (Figure 6, top).

A cluster of innovations including tractors, seeds, chemicals and irrigation, joined through timely information flows and better organized markets, raised yields to feed billions more without clearing new fields. In fact, since mid-century global cropland has remained stable. Expansion in developing countries has offset contraction in Europe and North America.

As the century draws to a close, the earth is at a historic turning point in land use. The continuing diffusion of high yields and efficient land use permits the absolute reversal of the destruction of nature that has occurred for many centuries.

Societies chronically fear exhaustion of the potential to increase food supply. In reality, the agricultural production frontier is still spacious, even without invoking the engineering of plants with new molecular techniques. For many decades in Iowa, while yields have risen steadily, the average corn grower has managed only half the yield of the Iowa master grower, and the world grows only about 20 percent of the top Iowa farmer. The production ratio of the performers has not changed much since 1960. In Iowa the average performer lags more than 30 years behind the state of the art.

Even where diffusion proceeds at a moderate pace, the effects accumulate dramatically. In India, for example, by raising wheat yields farmers spared 42 million hectares, about the size of Sweden or California, if we compare the land actually harvested in 1991 with the land the farmers would have harvested at 1961-66 yield for the actual production. Globally, the land spared since 1960 by raising yields of grain, which make up more than half of all calories, equals the Amazon basin (Figure 6, bottom).
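The land-sparing comparison described above reduces to one subtraction: the area that would have been needed at the old yield, minus the area actually harvested. The sketch below shows that arithmetic. The input values are hypothetical round numbers chosen for illustration, not India's data, and the function name is an assumption.

```python
def land_spared(production, old_yield, area_harvested):
    """Land spared by higher yields.

    production      -- actual output (million tons)
    old_yield       -- yield of the reference period (tons per hectare)
    area_harvested  -- land actually harvested (million hectares)
    """
    # Area that the same production would have required at the old yield.
    area_at_old_yield = production / old_yield
    return area_at_old_yield - area_harvested


# Hypothetical round numbers, for illustration only.
spared = land_spared(production=100.0, old_yield=1.0, area_harvested=40.0)
print(f"Land spared: {spared:.0f} million hectares")
```

With real production, yield and area series in place of the placeholders, the same function would yield the kind of estimate quoted for India.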

A single-minded concentration on land raises concern that side effects will harm the nature we seek to preserve. In fact, land requires little more clearing, tilling and cultivating for high yields than for low ones. Protecting lush foliage needs little more pesticide and usually less herbicide than sparse foliage. Luxuriant foliage also protects soil better from erosion. The law of diminishing returns applies to fertilizers, which farmers tend to use abundantly. In many areas yield gains now come by optimizing inputs such as nitrogen and phosphorus in step and lowering total application. In sum, careful management of the land we do use is likely to diminish the total fallout from food production. Most fallout is coextensive with land used.

What is a reasonable outlook for the land cropped for future population? Future calories per capita will likely lie between the 3,000 per day of a vegetarian diet and the 6,000 that include meat (counting dietary calories plus the calories fed to food and draft animals and not recovered in milk, meat and so on). Let us consider, as Paul Waggoner has done (Waggoner 1994), how much cropland a population of 10 billion, almost twice the present, could spare for wilderness or other purposes with that range of calories per capita. If farmers fail to raise global average yields from the present 2 tons grain equivalent per hectare, people will have to lower their daily portions to 3,000 calories to avoid further land clearing. But Irish wheat and American corn now average 8 tons per hectare. If farmers can lift the global average to 5 tons, 10 billion people on average can enjoy the diet 6,000 calories bring, and spare a quarter of the present 1.4 billion hectares of cropland. The quarter spared is about twice the size of Alaska. If future farmland on average yielded today’s U.S. corn, 10 billion eating an American diet could allow cropland the area of Australia to revert to wilderness.

Per hectare, world grain yields in fact rose 2.15 percent per year from 1960 to 1994. If dynamics continue as usual, farmers will grow 8 tons per hectare around 2060, at the end of the decade in which the United Nations projects population to reach 10 billion. From the Great Plains of America to the Great Plains of China, reversion of farms and ranches to woods and grasses will be a spreading, major environmental feature of the next decades, and beyond. And governments will avidly seek rationales to subsidize agriculture to keep it from contracting more rapidly than culture will allow.
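The date of about 2060 follows from compound growth. The sketch below assumes that the global average of 2 tons per hectare applies to 1994, the last year of the yield series, and that the 2.15 percent annual rise simply continues; the start year and the function name are assumptions made for illustration.

```python
import math


def years_to_reach(current, target, annual_growth):
    """Years of steady compound growth needed to go from `current` to `target`."""
    return math.log(target / current) / math.log(1.0 + annual_growth)


# Assumed start: 2 tons per hectare in 1994, growing 2.15 percent per year.
years_needed = years_to_reach(current=2.0, target=8.0, annual_growth=0.0215)
year_reached = 1994 + years_needed
print(f"8 tons per hectare reached around {year_reached:.0f}")
```

Quadrupling the yield at this rate takes about 65 years, which lands close to 2060.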

Materials

We can reliably project more efficient energy, decarbonization and effectively landless agriculture. What about a companion dematerialization? I will define dematerialization primarily as the decline over time in weight of materials used to perform a given economic function.

Dematerialization would matter enormously for the environment. Excluding water and oxygen, in 1990 each American mobilized on average about 50 kilograms per day. Reducing the materials intensity of the economy could preserve landscapes and natural resources, lessen garbage and reduce human exposures to hazardous materials.

Over time new materials substitute for old. Successful new materials usually show improved properties per ton, thus leading to a lower intensity of use for a given task. The idea is as old as the epochal succession from stone to bronze to iron. Our century has witnessed the relative decline of wood and the traditional metals and the rise of aluminum and especially plastics (Figure 7, top).

Modern examples of dematerialization abound. Since the early 19th century, the ratio of weight to power in industrial boilers has decreased almost 100 times. Within the steel industry, powder metallurgy, thin casting, ion-beam implantation and directional solidification as well as drop and cold forging have allowed savings up to 50 percent of material inputs in a few decades. In the 1970s a mundane invention, the radial tire, directly lowered weight and material by one-quarter below the bias-ply tire it replaced. An unexpected and bigger gain in efficiency came from the doubling of tire life by radials, so halving the use of material (and the piles of tire carcasses blighting landscapes and breeding mosquitoes). Lightweight optical fibers with 30 to 40 times the carrying capacity of conventional wiring and invulnerability to electromagnetic interference are ousting copper in many segments of the telecommunications infrastructure. The development of high-fructose corn syrup (HFCS) in the 1960s eliminated sugar from industrial uses in the United States. HFCS has five times sugar’s sweetening power on a unit-weight basis, with a proportional impact on agricultural land use.

Certainly many products–for example, cars, computers and containers–have become lighter and often smaller. Compact discs selling for less than $100 now contain 90 million home phone numbers of Americans, equivalent to the content of telephone books once costing $60,000 and weighing 5 tons. At midcentury, glass bottles dominated. In 1953 the first steel soft-drink can was marketed. Cans of aluminum, one-third the density of steel, entered the scene a decade later and by 1986 garnered more than 90 percent of the beer and soft-drink market. Between 1973 and 1992 the aluminum can itself lightened 25 percent. In 1976 polyethylene terephthalate resins began to win a large share of the market, especially for large containers previously made of glass.

Recycling, of course, diminishes the demand for primary materials and may thus be considered a form of dematerialization. No longer limited to resource-poor individuals and regions, during the past couple of decades recycling has regained standing as a generalized social practice in the U.S. and other societies with huge material appetites.

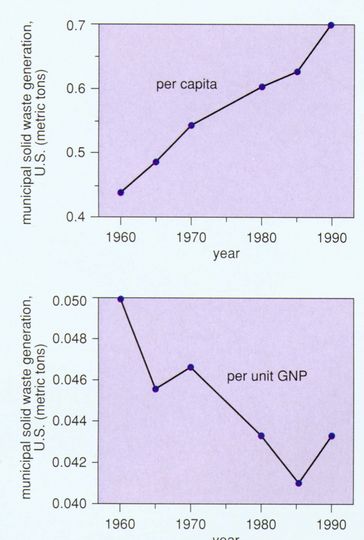

Difficulties arise in the more complex “new materials society” in which the premium lies on sophisticated materials and their applications. Alloys and composites with attractive structural properties can be hard to separate and recycle. Popular materials can be lighter but bulkier or more toxic. Reuse of plastics may be less economical than burning them (cleanly) for fuel or otherwise extracting their chemical energy. Most important, economic and population growth has multiplied the volume of products and objects. Thus, total wastes have tended to increase while declining per unit of economic activity (Figure 7, bottom).

By weight, construction materials make up about 40 percent of the materials Americans consume and thus form a significant metric. Although absolute use of physical-structure materials by weight has fluctuated, consumption per unit of economic activity has trended downward since 1970. Because energy materials such as petroleum constitute another 40 percent of our materials diet, increases in energy efficiency could also markedly dematerialize economies.

As yet, trends with respect to dematerialization are equivocal. Better and more complete data on materialization and dematerialization over long periods for the United States and the rest of the world need to be assembled and analyzed. Moreover, the heterogeneity of purpose of materials will never permit the performance of the materials sector to be summarized as simply as kilowatts and carbon can summarize energy or tons per hectare summarize land. A kilogram of iron does not compare with one of arsenic. But the promise clearly exists for what Robert Frosch, I and our colleagues call a superior “industrial ecology,” in which the materials intensity of the economy declines, wastes lessen and the wastes that are created become nutritious in new industrial food webs.

Water

We can get more value from each unit of energy, land and material. Can we squeeze more from a drop of water?

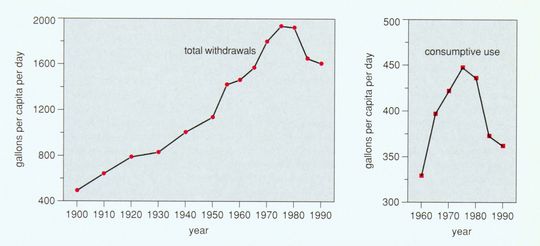

Total per capita water withdrawals quadrupled in the United States between 1900 and 1970, and overall personal consumption increased by one-third between just 1960 and the early 1970s (Figure 9). However, since 1975, per capita water use has fallen appreciably, at an annual rate of 1.3 percent. Absolute water withdrawals peaked about 1980.

Industry, alert to technology as well as costs, exemplifies the progress, although it consumes a small fraction of total water. Total industrial water withdrawals plateaued a decade earlier than total U.S. withdrawals and have dropped by one-third, more steeply than the total. More interesting, industrial withdrawals per unit of GNP (in 1982 dollars) have dropped steadily since 1940, when 14 gallons of water flowed into each dollar of output. Now the flow is less than 3 gallons per dollar. The steep decline taps many sectors, including chemicals, paper, petroleum refining, steel and food processing. After adjusting for production levels, not only intake but discharges per unit of production are perhaps one-fifth of what they were 50 years ago.

In manufacturing, technology as well as law and economics have favored frugal water use. More efficient use of heat and water usually go together, through better heat exchangers and the recirculation of cooling water. Legislation, such as the U.S. Clean Water Act of 1972, encouraged reduction of discharges and recycling and conservation as well as shifts in relative prices. Although water treatment may cost only about 5 percent of production, wastewater-treatment systems are expensive capital investments.

Despite the gains, the United States is far from most efficient practice. Water withdrawals for all users in the countries making up the Organization for Economic Cooperation and Development range tenfold, with the U.S. and Canada the highest. Allowing for differences in major uses (irrigation, electrical cooling, industry, public water supply), large opportunities for reductions remain. In the late 1980s over 90 percent of measured U.S. hazardous wastes were still wastewaters.

In the long run, with much higher thermodynamic efficiency for all processes, removing impurities to recycle water will require small amounts of energy. Dialytic membranes open the way to such efficient purification systems. Because hydrogen will be, with electricity, the main energy carrier, its combustion (if from seawater) may eventually provide another important source of fresh water, perhaps 200 liters per person per day at the level of final consumers, about one-fourth the current withdrawal in water-prudent societies such as Denmark. Importantly, as agriculture contracts spatially and irrigates more frugally, its water demand will shrink.

Population

I have demonstrated a revolution in factor productivity, whether energy, land, materials or water. The game to get more from less is old. In energy, global progress is documented for centuries. With land, the Chinese started long ago, but most of the world began only about 1940. 1940 also appears to have marked a crossing point for new materials. In water, U.S. industry joined the search about 1940, and the population more generally about 1970.

The catch for homo faber is that our technology not only spares resources but also expands our niche. Technology further adds to population by increasing longevity and decreasing mortality. Although fertility has also declined greatly, the role of new birth-control technologies in the decline has been small. Feedbacks may well also occur between population growth and density on the one hand and invention and innovation on the other.

Population provides a multiplier that determines total consumption. So far I have stressed ratios, not absolutes.

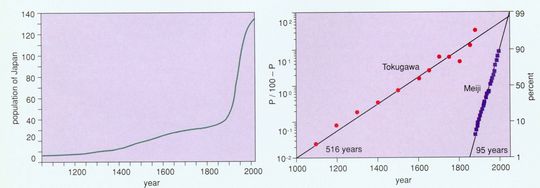

To see graphically how technology can change carrying capacity, consider the population history of Japan. From the establishment of the Tokugawa Shogunate about 1600 Japan insulated itself from outside technology until 1854 when American Commodore Matthew Perry reopened trade. In 1868 the Meiji restoration lessened the isolationist policy of the former imperial party, and Japan entered a period of great borrowing from the Occident. As evident in Figure 10, Japanese population growth since 1100 sorts perfectly into two pulses of growth. Tokugawa technology (and culture) and its medieval predecessors accommodated a gradual addition of 28 million over about five centuries to Japan’s earlier population of about 5 million. Meiji and Western technology keyed the opening of the niche to another 100 million or so in one century.
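A two-pulse history of this kind can be written as the sum of two logistic curves on top of a base population. The sketch below is a minimal illustration, not the fitting procedure behind Figure 10. The base of 5 million and the pulse sizes of 28 and 100 million come from the text; the midpoints and durations are hypothetical placeholders, and the function names are assumptions.

```python
import math


def logistic_pulse(year, saturation, midpoint, duration):
    """One logistic growth pulse.

    `duration` is the time taken to grow from 10 to 90 percent of
    `saturation`; `midpoint` is the year the pulse is half complete.
    """
    rate = math.log(81.0) / duration
    return saturation / (1.0 + math.exp(-rate * (year - midpoint)))


def japan_population(year):
    """Population in millions as a base plus two overlapping pulses."""
    base = 5.0  # earlier population, from the text
    # Pulse sizes are from the text; the timing values are hypothetical.
    first = logistic_pulse(year, 28.0, midpoint=1500, duration=500)
    second = logistic_pulse(year, 100.0, midpoint=1950, duration=100)
    return base + first + second


for year in (1100, 1600, 1868, 1950, 2100):
    print(year, round(japan_population(year), 1))
```

Each pulse saturates at its own ceiling, so the total levels off near 133 million once both are complete. Fitting the midpoints and durations to census data would replace the placeholder values.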

Reasoning about the link between technology and carrying capacity from the Japanese case, my colleague Perrin Meyer and I have speculated about the growth of the population of the U.S. We hypothesize a sequence of overlapping pulses of population growth centered on times of rapid economic expansion, the midpoints of tentatively identified 50-year-long waves of economic growth. Technological innovations affecting resources, processes and products cluster in each economic wave and expand carrying capacity. The first pulse of population growth associates with wood, iron, steam, canals, and wool and cotton textiles; the second with coal, steel, railways, telegraphy and early electrification; and the third with oil, plastics, autos, widespread electrification, telephony, computers and pharmaceuticals. The fourth, emerging pulse revolves around natural gas, aviation and a host of information and molecular technologies. Daring to extrapolate our reasoning with a “superlogistic” curve using the center points of the growth pulses as the base points, we find the U.S. population saturating around 400 million in 2100, a total consistent with projections made by conventional demographic methods.

Clearly the limits to human numbers keep shifting. In any case, analysis of historic population data shows that the global rate of growth peaked at about 2.1 percent per year around 1970, as noted near the outset of this article. Fertility rates, the key factor, have been falling in most nations and are below the levels needed to replace current population in Europe and Japan. The difficulty is that we have no logic to predict future fertility, and simply fitting an equation, as we did for the U.S., is chancy. Globally, the pervasive economic and social effects of the information revolution could allow the increase in human numbers to 15 or 50 or 100 billion, or influence the fertile to choose not to reproduce. The question of future population appears quite open, as reflected in the spray of projections.

Conclusion

Population frames the challenge for green technologists. To maintain current levels of cleanliness with the 50 percent increase in population I think likely for the United States and the current level and kind of economic activity, emissions per unit of activity would need to drop by one-third. That is an easy target. An improvement of 1.5 percent per year reaches the target by 2020, 80 years early.
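The target and its timing can be reproduced in a few lines. The sketch below assumes that total emissions equal population times emissions per unit of activity and that the count of years starts in 1995; both the start year and the function names are assumptions made for illustration.

```python
import math


def required_intensity_factor(population_growth):
    """Factor by which emissions per unit of activity must shrink so that
    total emissions stay flat while population grows by `population_growth`."""
    return 1.0 / (1.0 + population_growth)


def years_to_target(target_factor, annual_improvement):
    """Years of steady improvement needed to shrink intensity to `target_factor`."""
    return math.log(target_factor) / math.log(1.0 - annual_improvement)


factor = required_intensity_factor(0.50)        # 50 percent more people
years_needed = years_to_target(factor, 0.015)   # 1.5 percent cleaner per year
print(f"Intensity must fall to {factor:.2f} of today's level")
print(f"Reached after about {years_needed:.0f} years")
```

A 50 percent larger population requires intensity to fall to two-thirds of its present level, a drop of one-third, and a 1.5 percent annual improvement gets there in about 27 years, that is, in the early 2020s if counted from 1995.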

The challenge is much harder taking into account growing consumption. If economic activity doubles per capita roughly every 30 years, as it has since about 1800 in the industrialized countries, the result is an eightfold increase by 2100. Multiplied by population, the United States would have 12 times today’s emissions and demands on resources, other things being equal. This scenario of the “dirty dozen” requires micro- or zero emissions per unit of economic activity to maintain or enhance environmental quality. In other words, Americans need to clean processes by more than one order of magnitude. More reassuringly, the annual cleaning need be about 2.5 percent.
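The "dirty dozen" arithmetic can be laid out explicitly. The sketch below takes the eightfold growth in activity per capita and the 50 percent growth in population as given, and assumes the cleaning is spread evenly over the years from 1995 to 2100; the start year and the function name are assumptions made for illustration.

```python
def required_annual_cleaning(total_growth_factor, years):
    """Steady annual reduction in emissions per unit of activity that
    exactly offsets `total_growth_factor` over `years` years."""
    return 1.0 - total_growth_factor ** (-1.0 / years)


activity_growth = 8.0      # eightfold activity per capita, from the text
population_growth = 1.5    # 50 percent more people, from the text
total_growth = activity_growth * population_growth

annual_rate = required_annual_cleaning(total_growth, 2100 - 1995)
print(f"Total growth factor: {total_growth:.0f}")
print(f"Required annual cleaning: {annual_rate:.1%}")
```

Offsetting a twelvefold increase over roughly a century takes a little more than 2 percent per year under these assumptions, in line with the figure of about 2.5 percent.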

In Europe and Japan population is stable or even shrinking, easing the magnitude of their environmental challenges. The rest of the world, where most people live, faces the twin pressures of enlarging economies and populations. So in absolute terms the technical gains must be enormous.

But we have seen the outlines of how the gains can be made. In the long run, we need a smoke-free system of generating hydrogen and electricity that is highly efficient from generator to consumer, food decoupled from acreage, materials smartly designed and selected for their uses and recycled, and carefully channeled water. In short, we need a lean, dry, light economy.

In truth, I exaggerate the challenge. With respect to consumption, multiplying income will not cause an American to eat twice as much as today in 2020 or eight times more in 2100, and even a mouth moving today from Lima to Los Angeles only triples its original caloric intake. With respect to production, history shows that the economy can grow from epoch to epoch only according to a new industrial paradigm, not by inflating the old. High environmental performance forms an integral part of the modern paradigm of total quality. The past half-century signals the preferred directions: the changeover from oil to gas, the contraction of crops in favor of land for nature, the development of a new ecology of materials use in industry, and diffusion of more efficient water use to farmers and residents as well as industries.

Economists always worry about trading off benefits in one area for costs in another. Hearteningly, we have seen that in general efficiency in energy favors efficiency in materials; efficiency in materials favors efficiency in land; efficiency in land favors efficiency in water; and efficiency in water favors efficiency in energy. The technologies that will thrive, such as electricity, will concert higher resource productivity. Prone to fail is a technology, such as biomass farming for energy, which brings into conflict the goal to spare land with the goal to spare carbon.

Some worry that the supply of a fifth major resource, ingenuity, will run short. But nowhere do averages appear near the frontier of current best practice. Simply diffusing what we know can bring gains for several decades. Moreover, science and technology are young. Aggressively organized research and development (R&D) is another innovation of the past 50 years. Many industries have systematized their search for better practice (“endogenized R&D” in the economics jargon) and have the productivity gains to show for it. Other industries, including much of the service sector which now forms the bulk of modern economies, and the enlarging public and non-profit sectors have improved slowly. Overall, society hardly glimpses the theoretical limits of performance.

Inevitably, sectors and societies will advance at unequal pace. We will continue to have laggards as well as pioneers. Problems will arise from the distribution of goods, the actions and interactions of bads, shocking and poorly tailored innovations, and social traps such as the well-known “tragedy of the commons,” which today sadly entangles the wild stocks of fish. Yet the long history of technical progress and its reach into more sectors during recent decades encourage. Perhaps the first Earth Day in 1970 was an inflection point.

Policy can interfere wastefully with dynamics-as-usual, where they are benign. For example, decarbonization mandates the phasing out of the coal industry worldwide over the next decades; the political system might prudently assist those who lose their livelihoods, but not with dollars for actual coal. Wise policy favors science, experimentation and fluidity, while addressing inequity and insecurity and insuring against catastrophe.

Families named Smith, Cooper, and Miller people our nation because until not long ago most of us beat metal, bent casks, and ground grain. Now few workers hold such jobs. So far, except in video, we are not named Programmer, Sub-Micron, and Genesplicer. We easily forget how much the modern world has changed and yet how early our day is. We forget the power of compounding our technical progress, even at one or two percent per year. Knowledge can grow faster than population and provide abundant green goods and services. The message from history is that technology, wisely used, can spare the earth. You can click on it.

Acknowledgments

The author thanks Arnold Grübler, Raphael Kasper, Robert Kates, Alan McGowan, Perrin Meyer, Nebojsa Nakicenovic, Donald Rogich, Paul Waggoner and Iddo Wernick.

FIGURES

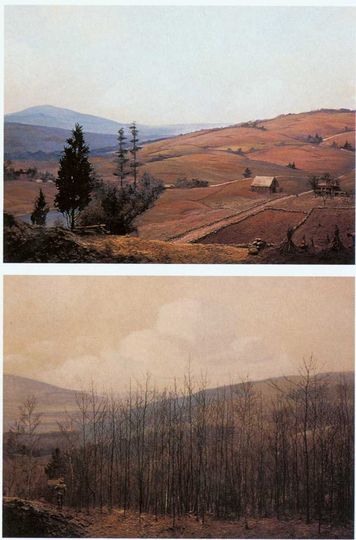

Figure 1. Advances in technology enable people to obtain services more efficiently. Greater efficiency in our use of energy, materials, land and water could help diminish the burdens people place on the environment. One possible future is captured by the vision of the “buffalo commons”: jobs would concentrate in urban areas (as, for example, at busy Dallas-Fort Worth Airport, above, where 37,000 people work) as vast lands in the interior of North America return to a wild state. Restoration of tall grass prairie in the Midwest, as at the Konza Prairie Research Natural Area in Kansas (also above), has been guided by conservation organizations and will accelerate as highly productive agriculture frees additional land. (Above photograph courtesy of Dallas Fort Worth Airport.)

Figure 2. Energy efficiency is a term of modern invention, but the efficiency of energy-conversion technologies has been increasing for hundreds and probably thousands of years. Improvements in motors and lamps are analyzed here as a logistic (sigmoid) growth process with a linear transform that normalizes the data to ease comparison. (From Ausubel and Marchetti, in press.)

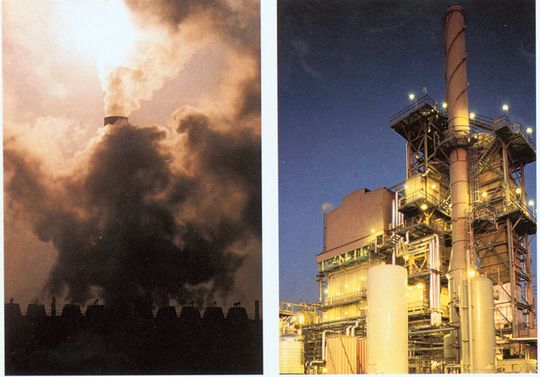

Figure 3. Decarbonization of the world’s energy mix moves the economy from dependence on carbon-heavy fuels, responsible for black lung, oil spills and large releases of climate-changing greenhouse gases. A power station in Bitterfeld, Germany (left), burned lignite, or “brown coal,” contributing to Eastern Europe’s severe air-pollution problem. The author envisions a cleaner energy economy based on hydrogen, whose combustion produces water vapor. At right a California plant produces hydrogen by steam reforming of natural gas. (Right photograph courtesy of Air Products and Chemicals, Inc.)

Figure 4. World primary energy sources have declined in carbon intensity since 1860. The evolution is seen in the ratio of hydrogen (H) to carbon (C) in the world fuel mix, graphed on a logarithmic scale, analyzed as a logistic growth process and plotted in the linear transform of the logistic curve (left). (After Marchetti 1985.) Wood has an effective hydrogen-to-carbon ratio of 0.1, coal 1, oil 2, and natural gas 4. Progression of the ratio above natural gas (methane, CH4) requires production of large amounts of hydrogen fuel without fossil energy. Carbon intensity can also be calculated as the ratio of the sum of the carbon content of all fuels to the sum of the energy content of all primary energy sources (right). For such a calculation carbon emissions in tons per kilowatt-year average: wood, 0.84; coal, 0.73; oil, 0.55; and gas, 0.44. (From Nakicenovic, in press.)
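
The second calculation described in the caption, total carbon divided by total primary energy, can be sketched in a few lines. This is only an illustration: the emission factors are the four averages quoted above, while the function name and the sample fuel mix are hypothetical and are not taken from the underlying data.

```python
# Average carbon emission factors quoted in the caption,
# in tons of carbon per kilowatt-year of primary energy.
CARBON_TONS_PER_KW_YEAR = {"wood": 0.84, "coal": 0.73, "oil": 0.55, "gas": 0.44}


def carbon_intensity(energy_by_fuel):
    """Return tons of carbon per kilowatt-year for a mix of primary energy.

    energy_by_fuel maps a source name to the energy it supplies, in any
    consistent energy unit. Sources missing from the factor table (for
    example hydro or nuclear) are assumed here to be carbon-free.
    """
    total_energy = sum(energy_by_fuel.values())
    if total_energy <= 0:
        raise ValueError("total energy must be positive")
    total_carbon = sum(
        CARBON_TONS_PER_KW_YEAR.get(fuel, 0.0) * energy
        for fuel, energy in energy_by_fuel.items()
    )
    return total_carbon / total_energy


# Hypothetical mix, chosen only to show the call; not historical data.
sample_mix = {"coal": 30.0, "oil": 40.0, "gas": 20.0, "nuclear": 10.0}
print(round(carbon_intensity(sample_mix), 3))
```

Shifting energy from coal toward gas or toward carbon-free sources lowers the result, which is the sense in which the fuel mix decarbonizes.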

Figure 5. Much American land cleared by early settlers has reverted to nature as a result of changes closely related to technological progress and the increasing ability to grow more crops per acre. As better transport and machines made farming the rich soils of the Midwest highly profitable, New England farmers abandoned the rocky fields they had cleared. Dioramas on display at the Harvard Forest in Petersham, Mass., document the return of New England to forest. This pair shows the landscape around Petersham in 1830, at the height of cultivation, and a century later, when volunteer pines and the maturing hardwoods that followed them filled the landscape. (Photographs courtesy of the Fisher Museum at Harvard Forest.)

Figure 6. Rising yields of wheat on four continents (top) illustrate progress in agricultural productivity. Improved yields have allowed the global area actually harvested for grain to remain stable at around 600 million hectares (bottom). “Land spared” is the amount of land that would have been needed to produce actual grain crops with the 1960 average yield. (Data from Mitchell 1980, Yearbooks of the Food and Agriculture Organization and the U.S. Department of Agriculture’s “PS&D View,” a database.)
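
The definition of “land spared” in the caption amounts to simple arithmetic, sketched below. The function name and the yield figures in the example are hypothetical; only the roughly 600 million hectares of harvested area comes from the caption.

```python
def land_spared(area_harvested, current_yield, base_yield):
    """Return the extra area that would have been needed at the base-year yield.

    area_harvested: land actually harvested (e.g. million hectares)
    current_yield:  actual output per unit area (e.g. tons per hectare)
    base_yield:     the reference yield, here the 1960 average, in the same units
    """
    if base_yield <= 0:
        raise ValueError("base yield must be positive")
    production = area_harvested * current_yield      # actual crop produced
    area_needed_at_base = production / base_yield    # area required at the old yield
    return area_needed_at_base - area_harvested


# Illustrative numbers only: if yields doubled while the harvested area stayed
# near 600 million hectares, an equal area would have been spared.
print(land_spared(area_harvested=600.0, current_yield=2.0, base_yield=1.0))
```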

Figure 7. Countervailing trends can be detected in the use of materials in the US. In the top graph, production data are divided by the Gross National Product in constant (1982) dollars and normalized to 1940. The use of heavy materials such as steel has been supplanted in the economy by lighter materials, especially plastics. Since 1970 even aluminum and the agricultural minerals, phosphates and potash, have declined in relative use. Municipal solid-waste generation, however, has grown steadily on a per capita basis. In relation to GNP, solid-waste generation dropped 1960-1985 but climbed again recently. (From Wernick et al., in press. Data from U.S. Bureau of the Census 1975 and 1993; U.S. Environmental Protection Agency 1992.)

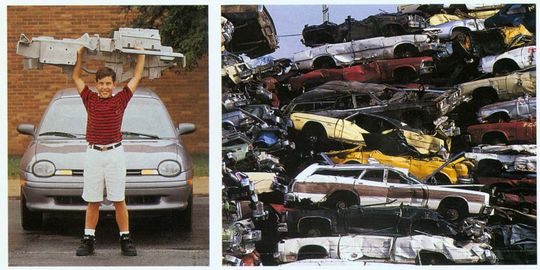

Figure 8. Auto manufacturing today uses plastics and lightweight metals, exemplifying dematerialization, the trend toward lighter materials and products per unit of economic activity. A Chrysler demonstration car, the Neon Lite, includes a lightweight instrument panel made of magnesium (left). Yet total wastes have risen in the U.S. with economic and population growth. At right, crushed automobiles pile high at a Philadelphia scrap-metal reclamation center. (Left photograph courtesy of Chrysler Corporation.)

Figure 9. Total per capita water withdrawals quadrupled in the U.S. between 1900 and 1970, and overall personal consumption (right) increased by one-third between 1960 and the early 1970s. Since 1975, however, per capita water use has fallen 1.3 percent annually. Industrial withdrawals per unit of GNP have dropped steadily since 1940, encouraged by technology as well as law and economics. Data from other nations show that the U.S. is far from most efficient practice. (Data from U.S. Bureau of the Census 1975 and 1993.)

Figure 10. History of Japanese population growth shows how technology changes carrying capacity. Under the Tokugawa Shogunate from about 1600 to 1854, Japan insulated itself from outside technology. The right-hand graph decomposes the population data into a pair of logistic growth pulses in linear form. The pulse the Tokugawa Shoguns took to its culmination was centered in 1537, required 516 years to grow from 10 percent to 90 percent of its extent, and saturated at 28 million people (on top of a pre-existing level of 5 million). The Meiji pulse, centered in 1950, required 95 years, and is saturating now with an addition of 103 million. (Meyer 1994. Data from Tsuneta Yano Memorial Society 1993 and Taeuber 1958.)
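
The two pulses in the caption can be combined in a short sketch. It assumes the common three-parameter logistic, in which a pulse is fixed by its saturation level, its midpoint year and the time it takes to grow from 10 to 90 percent; the function names are hypothetical, and the parameter values are those quoted in the caption.

```python
import math


def logistic_pulse(year, saturation, midpoint, growth_time):
    """Logistic pulse that rises from 10% to 90% of `saturation` in `growth_time` years."""
    rate = math.log(81.0) / growth_time  # ln(81) spans the 10%-90% interval
    return saturation / (1.0 + math.exp(-rate * (year - midpoint)))


def linear_transform(fraction):
    """Map a fraction of saturation (0 < f < 1) so a logistic plots as a straight line."""
    return math.log(fraction / (1.0 - fraction))


def japan_population(year):
    """Population in millions: a base level plus the two pulses from the caption."""
    base = 5.0                                              # pre-existing level
    tokugawa = logistic_pulse(year, 28.0, 1537.0, 516.0)    # first pulse
    meiji = logistic_pulse(year, 103.0, 1950.0, 95.0)       # second pulse
    return base + tokugawa + meiji


for year in (1600, 1850, 1950, 2000):
    print(year, round(japan_population(year), 1))
```

Because each pulse is a straight line under `linear_transform`, the two growth phases can be compared on one graph even though their durations differ by a factor of five.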

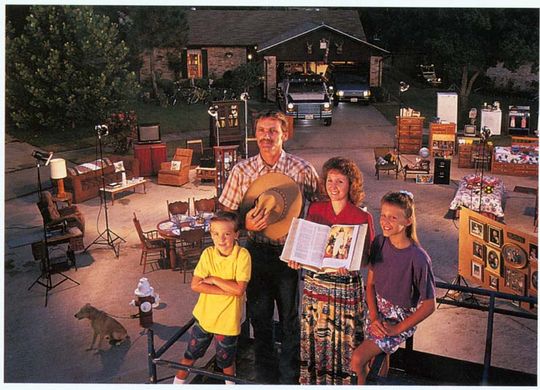

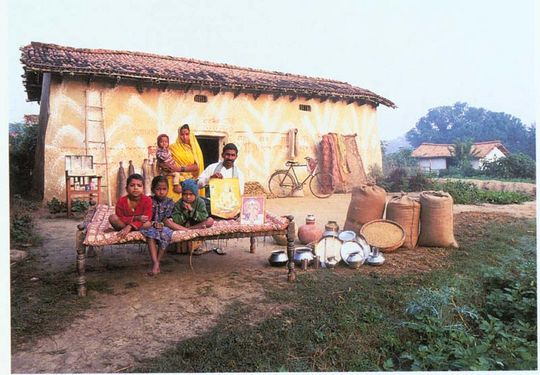

Figure 11. Growing consumption raises the challenge of saving resources through technology. Since about 1800 in industrialized countries, economic activity has doubled per capita roughly every 30 years. The contrast between consumption in developed and developing countries is illustrated by the “global family portrait” developed by the Material World project, which photographed typical families with their possessions around the world. Shown here are the Skeen family of Pearland, Texas, and the Yadev family of Ahraura, India. (From Material World, Sierra Club Books 1994.)
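
The doubling time in the caption converts directly into an annual growth rate and a cumulative multiple. The sketch below shows the arithmetic; the function names are hypothetical, and the 30-year doubling time is the only figure taken from the caption.

```python
def annual_rate_from_doubling(doubling_years):
    """Return the constant annual growth rate that doubles a quantity in `doubling_years`."""
    return 2.0 ** (1.0 / doubling_years) - 1.0


def growth_factor(elapsed_years, doubling_years):
    """Return the multiple reached after `elapsed_years` at that doubling time."""
    return 2.0 ** (elapsed_years / doubling_years)


rate = annual_rate_from_doubling(30.0)
print(f"annual growth rate: {rate:.2%}")
print(f"multiple after 90 years: {growth_factor(90.0, 30.0):.0f}")
```

A rate of a little over two percent per year looks modest, yet it multiplies per capita activity eightfold in three doublings, which is why resource efficiency has to improve at comparable rates.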

Bibliography

Ausubel, J. H. 1992. Industrial ecology: Reflections on a colloquium. Proceedings of the National Academy of Sciences 89(3):879-884.

Ausubel, J. H. 1991. Energy and environment: The light path. Energy Systems and Policy 15(3):181-188.

Ausubel, J. H., and C. Marchetti. 1996. Elektron: Electrical systems in retrospect and prospect. Daedalus 125(3):139-169.

Ayres, R. U. 1989. Energy Inefficiency in the US Economy: A New Case for Conservation. RR-89-12. Laxenburg, Austria: International Institute for Applied Systems Analysis.

Ayres, R. U. 1994. Information, Entropy, and Progress. New York: American Institute of Physics.

Banks, R. B. 1994. Growth and Diffusion Phenomena. Berlin: Springer-Verlag.

Bernardini, O., and R. Galli. 1993. Dematerialization: Long term trends in the intensity of use of materials and energy. Futures 25(4):431-448.

Boserup, E. 1981. Population and Technical Change: A Study of Long-Term Trends. Chicago: University of Chicago Press.

Curzio, A. Q., M. Fortis and R. Zoboli, eds. 1994. Innovation, Resources, and Economic Growth. Berlin: Springer-Verlag.

Frosch, R. A. 1992. Industrial ecology: A philosophical introduction. Proceedings of the National Academy of Sciences 89(3):800-803.

Frosch, R. A. 1994. Industrial ecology: Minimizing the impact of industrial waste. Physics Today (November):63-68.

Graedel, T. E., and B. R. Allenby. 1995. Industrial Ecology. Englewood Cliffs, New Jersey: Prentice-Hall.

Grübler, A. 1994. Technology. In Changes in Land Use and Land Cover, eds. W. B. Meyer and B. L. Turner. Cambridge, U.K.: Cambridge University Press, pp. 287-328.

Grübler, A., and N. Nakicenovic, eds. 1991. Diffusion of Technologies and Social Behavior. Berlin: Springer-Verlag.

Grübler, A., and H. Nowotny. 1990. Towards the fifth Kondratiev upswing: Elements of an emerging new growth phase and possible development trajectories. International Journal of Technology Management 5(4):431-471.

Herman, R., S. A. Ardekani and J. H. Ausubel. 1989. Dematerialization. In Technology and Environment, eds. J. H. Ausubel and H. E. Sladovich. Washington, D.C.: National Academy Press, pp. 50-69.

Hirschman, C. 1994. Why Fertility Changes. Annual Review of Sociology 20:203-233.

Homer-Dixon, T. 1994. The ingenuity gap: Can poor countries adapt to resource scarcity? Toronto: Peace and Conflict Studies Program, University of Toronto.

Kates, R. W. 1996. Population, technology and the human environment: A thread through time. Daedalus 125(3):43-72.

Kelley, A. C. 1988. Economic consequences of population change in the Third World. Journal of Economic Literature XXVI:1685-1728.

Lutz, W., ed. 1994. The Future of World Population Growth: What Can We Assume Today? London: Earthscan.

Marchetti, C. 1985. Nuclear plants and nuclear niches. Nuclear Science and Engineering 90:521-526.

Marchetti, C. 1989. How to solve the CO2 problem without tears. International Journal of Hydrogen Energy 14(8):493-506.

Marchetti, C., P. S. Meyer and J. H. Ausubel. 1996. Human population dynamics revisited with the logistic model: How much can be modeled and predicted? Technological Forecasting and Social Change 52:1-30.

Matthews, A. 1992. Where the Buffalo Roam. New York: Grove Weidenfeld.

Meyer, P. 1994. Bi-logistic growth. Technological Forecasting and Social Change 47:89-102.

Mitchell, B. R. 1980. European Historical Statistics 1750-1975, 2nd ed. New York: Facts on File.

Nakicenovic, N. 1989. Technological Progress, Structural Change and Efficient Energy Use: Trends Worldwide and in Austria, International Part. Laxenburg, Austria: International Institute for Applied Systems Analysis.

Nakicenovic, N. 1996. Decarbonization. Daedalus 125(3):95-112.

Organization for Economic Cooperation and Development. 1991. The State of the Environment. Paris: OECD.

Perez, C. 1983. Structural change and the assimilation of new technologies in the economic and social system. Futures 15(5):357-375.

Rogich, D.G., et al. 1993. Materials Use, Economic Growth, and the Environment, presented at the International Recycling Congress and REC ’93 Trade Fair. Washington, D.C.: U.S. Bureau of Mines.

Taeuber, I. B. 1958. The Population of Japan. Princeton: Princeton University Press.

Tsuneta Yano Memorial Society, ed. 1993. Nippon: A Charted Survey of Japan, 1993/1994. Tokyo: Kokusei-Sha.

Turner, B. L., II, R. W. Kates, J. F. Richards, J. T. Matthews and W. B. Meyer. 1990. The Earth as Transformed by Human Action. New York: Cambridge University Press.

United Nations Population Division, Department of Economic and Social Information and Policy Analysis. 1994. World Population Prospects: The 1994 Revision. New York: United Nations.

U.S. Bureau of the Census. 1975. Historical Statistics of the United States, Colonial Times to 1970. Washington, D.C.: U.S. Government Printing Office.

U.S. Bureau of the Census. 1993. Statistical Abstract of the United States: 1992, 112th ed. Washington, D.C.: U.S. Government Printing Office.

U.S. Bureau of Mines. 1990. The New Materials Society. Volumes I-III. Washington, D.C.: U.S. Government Printing Office.

U.S. Environmental Protection Agency. 1992. Characterization of Municipal Solid Waste in the United States: 1992 Update. Final Report, No. 530-R-92-019. Washington, D.C.: Environmental Protection Agency.

U.S. Geological Survey. 1993. Estimated Use of Water in the United States in 1990. Circular 1081. Washington, D.C.: U.S. Government Printing Office.

U.S. Geological Survey. 1987. National Water Summary 1987-Hydrologic Events and Water Supply and Use. Water Supply Paper 2350. Washington, D.C.: U.S. Government Printing Office, pp. 81-92.

Waggoner, P. E. 1994. How Much Land Can Ten Billion People Spare for Nature? Ames, Iowa: Council for Agricultural Science and Technology.

Wernick, I. K., and J. H. Ausubel. 1995. National materials flows and the environment. Annual Review of Energy and the Environment 20:463-492.

Wernick, I. K., R. Herman, S. Govind and J. H. Ausubel. 1996. Materialization and dematerialization: Measures and trends. Daedalus 125(3):171-198.

Jesse H. Ausubel is director of the Program for the Human Environment and Senior Research Associate at The Rockefeller University and a program officer of the Alfred P. Sloan Foundation in New York City. From 1977 to 1988, he was associated with the National Academy complex in Washington as a fellow of the National Academy of Sciences, then as a staff officer with the National Research Council Board on Atmospheric Sciences and Climate, and finally as director of programs for the National Academy of Engineering, where he developed and oversaw studies on the performance of technology-intensive sectors of U.S. industry and on the diffusion and globalization of technology. In recent years, he has helped originate industrial ecology, the study of the networks of flows of materials and energy in industry. Educated at Harvard and Columbia, Ausubel served on the U.S. Environmental Protection Agency’s Science Advisory Board. Address: Program for the Human Environment, The Rockefeller University, 1230 York Avenue, Box 234, New York, NY 10021-6399. Internet: phe@mail.rockefeller.edu.